How do we update hundreds of Ghost websites on Docker Swarm?

Our clients care about their Ghost updates. In this post, we reveal how we update all of our Ghost sites. Under the hood, many updates happen all the time. We maintain our own Ghost Docker image. Check it out on our GitHub release page.

At a high level, we manage the backend for our clients. Our whole cluster runs on a public cloud with Linux, Docker Swarm, orchestrated services (containers), CI/CD, zero-downtime deployments and many other DevOps best practices.

DevOps best practices are essential to us. Many checkpoints ensure our Ghost sites run smoothly.

Ghost updates

Now let's talk about the way we update Ghost for our clients. We usually wait 1-2 weeks before applying updates to our Ghost sites (and all our software packages really).

Here is an example showing why we don't automatically update Ghost as soon as a new version is released. Waiting gives the community time to catch any critical bugs that emerge.
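The waiting rule can be sketched in a few lines of Python. This is only an illustration: the 14-day hold (the upper end of the 1-2 week window), the function name and the dates are assumptions, not part of the FirePress tooling.

```python
from datetime import datetime, timedelta, timezone

# Assumption: hold releases for 14 days, the upper end of the 1-2 week window.
HOLD_PERIOD = timedelta(days=14)


def is_ready_to_apply(released_at: datetime, now: datetime,
                      hold: timedelta = HOLD_PERIOD) -> bool:
    """Return True once a release is at least `hold` old."""
    return now - released_at >= hold


if __name__ == "__main__":
    # Hypothetical release date, used only for the demo.
    released = datetime(2019, 6, 1, tzinfo=timezone.utc)
    print(is_ready_to_apply(released, datetime(2019, 6, 8, tzinfo=timezone.utc)))   # False
    print(is_ready_to_apply(released, datetime(2019, 6, 15, tzinfo=timezone.utc)))  # True
```

A check like this would typically run before the update pipeline starts, so a release that is too fresh never reaches the build step.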

CI/CD

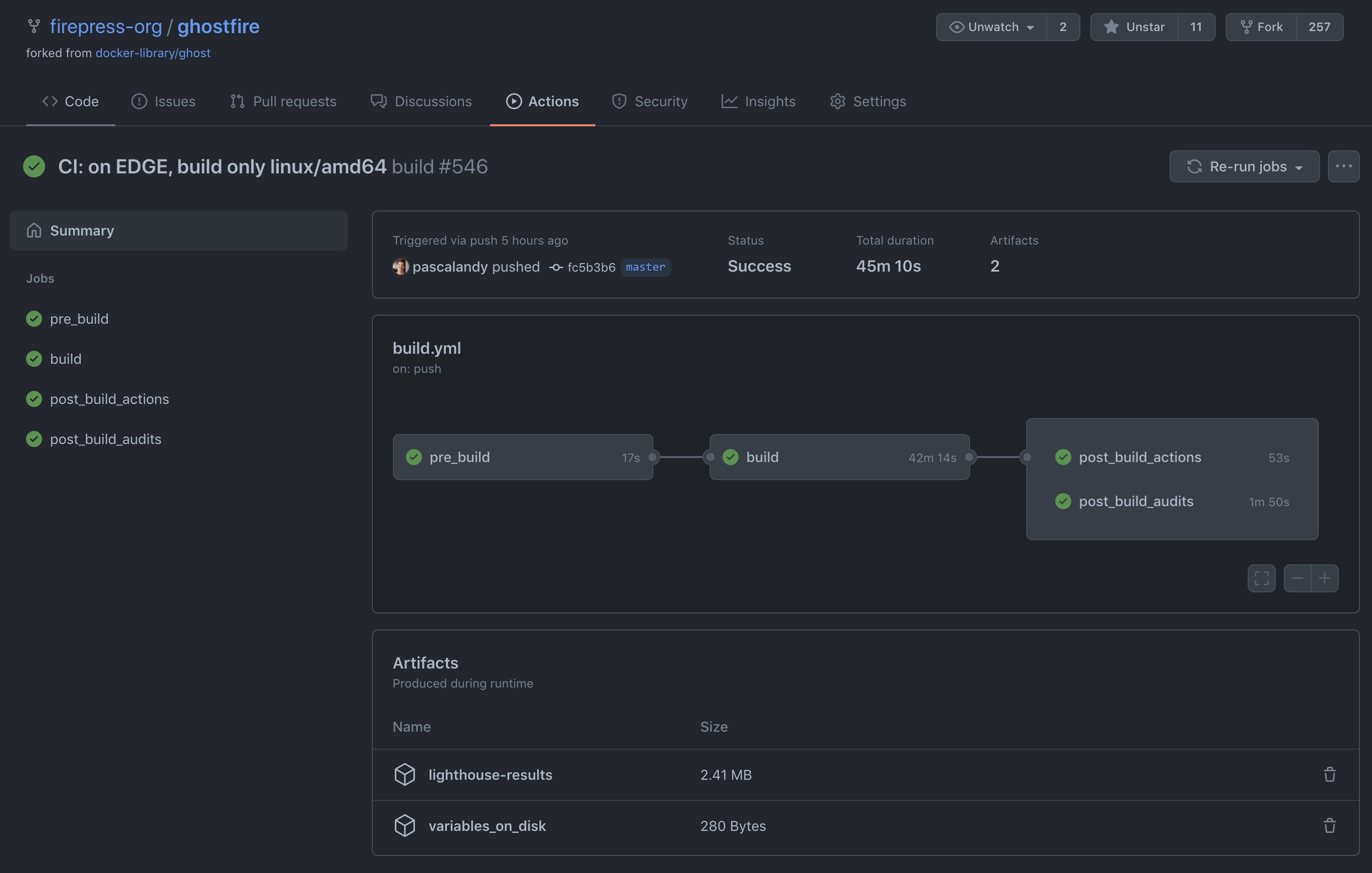

We take Continuous Integration and Continuous Deployment very seriously at FirePress. If you are curious about what is happening with our Ghost builds, you can follow them here:

github.com/firepress-org/ghostfire/actions/workflows/build.yml

The master branch is probably the one you want to watch as it's the build your website will run on.

Best practices

This is how we carefully roll out every Ghost update. We use three kinds of tags:

- site edge

- site stable

- site stable-hash (client's site)
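To make the tag names concrete, here is a small Python sketch of how a branch could map to image tags. It assumes the stable-hash tag is the Ghost version followed by a short commit hash, which is inferred from the example tag devmtl/ghostfire:2.23.3-30da41f shown later in this post. The function name and the full commit hash in the demo are hypothetical.

```python
from typing import List

REPO = "devmtl/ghostfire"


def image_tags(branch: str, version: str, commit_sha: str) -> List[str]:
    """Return the image tags a successful build of `branch` would receive."""
    short_sha = commit_sha[:7]  # assumption: 7-character short hash
    if branch == "edge":
        return [f"{REPO}:edge"]
    if branch == "stable":
        # The moving `stable` tag plus an immutable version-hash tag.
        return [f"{REPO}:stable", f"{REPO}:{version}-{short_sha}"]
    raise ValueError(f"no tags defined for branch {branch!r}")


if __name__ == "__main__":
    # Hypothetical full hash whose first 7 characters match the example tag.
    print(image_tags("stable", "2.23.3", "30da41f0123456789abcdef"))
```

The moving tag (stable) is convenient for the test site, while the version-hash tag never changes once pushed, which makes it a safe thing to pin client sites to.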

It goes like this:

- We use a repeatable Dockerfile declaration that executes every step needed for a clean installation of the software. No manual mistake can happen here, as the Dockerfile defines the OS, libraries and the Ghost app.

- A commit happens via GitHub. A webhook triggers the CI (continuous integration) system, Travis, which builds the edge Docker image from the edge branch.

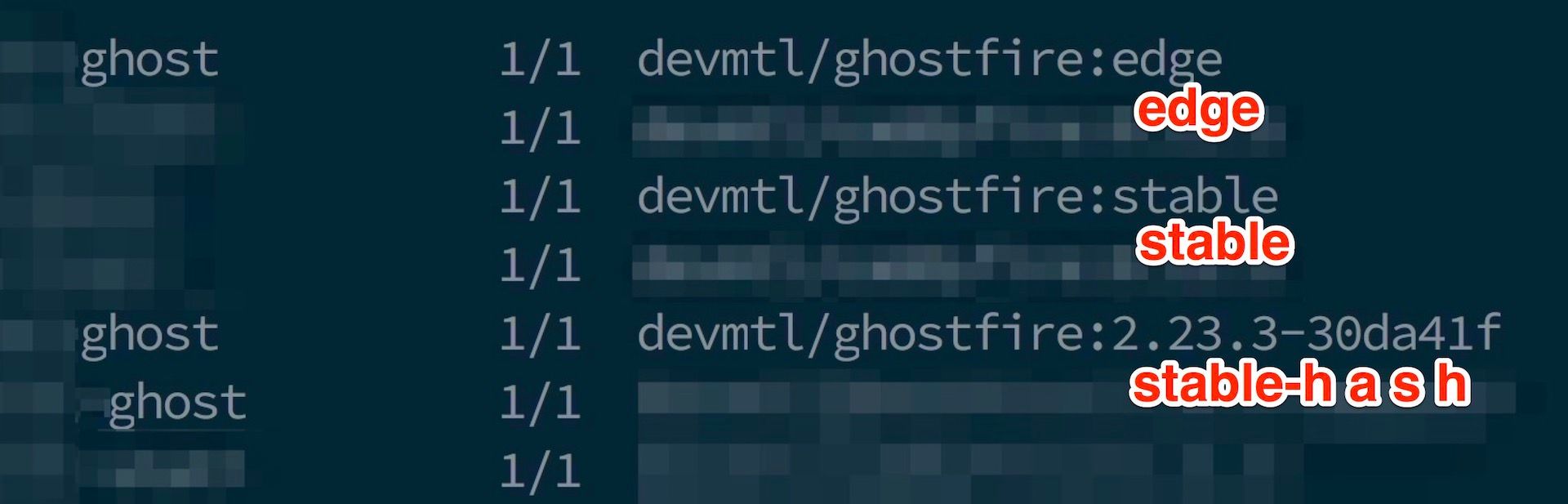

- Within the CI, the system executes many tests. You can see one of them here. When the build is successful, the system tags the Docker image as devmtl/ghostfire:edge. If something fails, everything stops at this point, and we receive an email saying something has failed.

- In our PROD environment, our CD (continuous deployment) checks every minute whether a new edge image is available on Docker Hub. This is almost magic! This applies only to the site edgetest.firepress.org (a fake site, used only to help you understand the concept).

- We manually browse edgetest.firepress.org. If we can navigate the site normally, we consider the edge Docker image as "passed". In the future, we might automate this part as well. Follow this issue for all details.

- A commit happens via GitHub. A webhook triggers the CI (continuous integration) system, Travis, which builds the stable Docker image from the stable branch.

- Within the CI, the system executes many tests. You can see one of them here. When the build is successful, the system applies two tags: devmtl/ghostfire:stable and devmtl/ghostfire:stable-hash. If something fails, everything stops at this point, and we receive an email saying something has failed.

- In our PROD environment, our CD (continuous deployment) checks every minute whether a new stable image is available on Docker Hub. It is still magical! This applies only to the site stabletest.firepress.org (a fake site, used only to help the reader understand the concept).

- We manually browse stabletest.firepress.org. If we can navigate the site normally, we consider the stable Docker image as "passed". In the future, we might automate this part as well. Follow this issue for all details.
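The "check every minute" step in the list above can be pictured as a small polling loop that redeploys only when the image behind a tag changes. The sketch below is an assumption about how such a watcher could work, not the actual FirePress CD code. The registry lookup and the deploy action are passed in as plain functions (`fetch_digest` and `deploy`, both hypothetical names) so the logic stays independent of any registry client.

```python
import time
from typing import Callable, Optional


def poll_once(fetch_digest: Callable[[], str],
              last_digest: Optional[str],
              deploy: Callable[[str], None]) -> str:
    """Deploy only when the digest behind the tag has changed."""
    digest = fetch_digest()
    if digest != last_digest:
        deploy(digest)
    return digest


def watch(fetch_digest: Callable[[], str],
          deploy: Callable[[str], None],
          current_digest: Optional[str],
          interval_seconds: float = 60.0,
          max_checks: Optional[int] = None,
          sleep: Callable[[float], None] = time.sleep) -> None:
    """Poll at a fixed interval; `max_checks=None` means run forever."""
    last = current_digest
    checks = 0
    while max_checks is None or checks < max_checks:
        last = poll_once(fetch_digest, last, deploy)
        checks += 1
        sleep(interval_seconds)


if __name__ == "__main__":
    # Fake registry answers for the demo: the tag moves on the third check.
    answers = iter(["sha256:aaa", "sha256:aaa", "sha256:bbb"])
    watch(lambda: next(answers),
          lambda d: print("deploying", d),
          current_digest="sha256:aaa",
          max_checks=3,
          sleep=lambda _seconds: None)  # skip real waiting in the demo
```

Comparing digests rather than tag names matters here, because a moving tag such as edge or stable keeps the same name while pointing to a new image.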

Now all our tests have passed!

- We are now ready to update all our clients' sites using the Docker image devmtl/ghostfire:stable-hash.

- Note that the tags devmtl/ghostfire:stable and devmtl/ghostfire:stable-hash point to the same Docker image. This makes our CD process easy.

- We update a configuration that specifies the tag (devmtl/ghostfire:stable-hash) that every site must run on. For a real-world example, look at the end of this log file and you will find: devmtl/ghostfire:2.23.3-30da41f

- We commit the fact that every Ghost site must now run devmtl/ghostfire:2.23.3-30da41f

- We launch the command that performs a rolling update from the previous Docker image to the newest one (devmtl/ghostfire:2.23.3-30da41f). This means zero downtime. Not even one second down.

- If something is failing, it's probably not related to the Docker container. It might be a network issue, a proxy issue, a load balancer issue, or something else.
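For readers who want to picture the rolling update, here is a hedged Python sketch that assembles a `docker service update` command for one Swarm service. The flags used (`--image`, `--update-order`, `--update-parallelism`, `--update-failure-action`) are standard Docker CLI options, but the values chosen and the service name `ghost_example` are assumptions, not the exact command FirePress runs.

```python
import shlex
from typing import List


def rolling_update_command(service: str, image: str) -> List[str]:
    """Build the argv for a rolling image update of one Swarm service."""
    return [
        "docker", "service", "update",
        "--image", image,
        # Start the new task before stopping the old one to avoid a gap.
        "--update-order", "start-first",
        # Replace one task at a time.
        "--update-parallelism", "1",
        # Go back to the previous image if the new tasks fail.
        "--update-failure-action", "rollback",
        service,
    ]


if __name__ == "__main__":
    cmd = rolling_update_command("ghost_example", "devmtl/ghostfire:2.23.3-30da41f")
    # Printed rather than executed, so the sketch is safe to run anywhere.
    print(shlex.join(cmd))
```

Running the same function over every service in the cluster, with the pinned version-hash tag, is one way to apply the committed configuration site by site.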

Below is a screenshot of three sites running in our cluster:

Our cluster

- There is no staging cluster. The staging is done in GitHub Actions CI. We only have "staging sites" (edge, stable) along with all other sites we manage in the cluster.

- Our main cluster lives in New York on top of one of the big cloud providers.

- In the future, we might run two clusters in two regions. For example, one in New York and the other in Amsterdam. This will depend on our clients' locations. Fortunately, this is not a challenge at the moment as our sites run behind a powerful CDN powered by Cloudflare.

Conclusion

You can see how we do everything we can to avoid human errors and to ensure that you are always running a fresh build.

It's worth noting that we never update an existing Docker image. We always build a new one from scratch. This way, all patches and security fixes are applied to your Ghost site during every update.
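As an illustration of building from scratch, the sketch below assembles a `docker build` command that ignores the layer cache (`--no-cache`) and re-pulls the base image (`--pull`), so the newest OS and library patches are picked up. Both flags are standard Docker CLI options; whether FirePress uses exactly these flags is an assumption, and the function name is hypothetical.

```python
import shlex
from typing import List


def fresh_build_command(tag: str, context: str = ".") -> List[str]:
    """Build the argv for a clean image build with no cached layers."""
    return [
        "docker", "build",
        "--no-cache",  # rebuild every layer instead of reusing old ones
        "--pull",      # always fetch the latest base image
        "-t", tag,
        context,
    ]


if __name__ == "__main__":
    # Printed rather than executed, so the sketch is safe to run anywhere.
    print(shlex.join(fresh_build_command("devmtl/ghostfire:edge")))
```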

Cheers!

Pascal — FirePress Team 🔥📰